Data Engineering Weekly #154

The Weekly Data Engineering Newsletter

RudderStack is the Warehouse Native CDP, built to help data teams deliver value across the entire data activation lifecycle, from collection to unification and activation. Visit rudderstack.com to learn more.

Sanjeev Mohan: Unveiling the Crystal Ball: 2024 Data and AI Trends

Sanjeev & Rajesh, as usual, share their excellent observations about data & AI industry trends. I love the rising, stable, and declining format for categorizing data engineering trends. My take on the rising trends

🟡 Intelligent Data Platform: Yes, I fully agree. We barely starting to scratch the surface of its possibility. However, I’m less optimistic about the “multi-engine” orchestrator part.

🔴AI Agents: This is not possible until the data tools figure out a way to integrate into their workflow to use AI Agent instead of relying on users to enter the prompts. No 1 rule of the product experience is “Don’t make the user think”; for me, “prompting” makes me think a lot.

🟢 Personalized AI Stack: Yes, I fully agree

🟢 AI Governance: Yes, I fully agree

On the Declining side, my thoughts are shared in a simple LinkedIn post here.

https://sanjmo.medium.com/unveiling-the-crystal-ball-2024-data-and-ai-trends-74164da31cf8

DoorDash: Privacy Engineering at DoorDash Drive

DoorDash writes about its architecture and policy to protect user privacy at scale. The technique for geomasking is an excellent read.

https://doordash.engineering/2023/11/14/privacy-engineering-at-doordash-drive/

Richa Verma: Rollout Roulette - Why should Data PMs care about Feature Rollout

rollout is much more than turning on/off a feature flag. Your rollout can make/break the core experience of your product without showing much visual change. The author explores why data PMs have to be more cautious in planning and executing a rollout.

https://thedataproductmanager.substack.com/p/rollout-roulette-why-should-data

Mercado Libre: How do we structure a data team here at Mercado Libre?

Is it centralized or Distributed? Which data team org structure works very best for a company? I believe there is no one answer to it; the author explores the various organization model and their adoption of Mercado Libre.

Sponsored: RudderStack launches Trino Reverse ETL source

The integration supports warehouse-based diffing, making it the most performant Reverse ETL solution for Trino.

RudderStack just launched Trino as a Reverse ETL source. It's the only Trino Reverse ETL solution that supports warehouse-based CDC. With Rudderstack and Trino, you can also create custom SQL queries for building data models — execute these using Trino via RudderStack and seamlessly deliver your data to your downstream business tools. Read the announcement for more details.

https://www.rudderstack.com/blog/feature-launch-trino-reverse-etl-source/

Google: Advancements in machine learning for machine learning

Google writes about exciting advancements in ML for ML. The blog explores how Google uses ML to improve the efficiency of ML workloads!

https://blog.research.google/2023/12/advancements-in-machine-learning-for.html

Meta: AI debugging at Meta with HawkEye

Meta writes about HawkEye, a toolkit for monitoring and debugging machine learning workflows, enhancing the efficiency of resolving production issues. It features advanced algorithms for isolating model-related problems, diagnosing prediction anomalies, and identifying training data issues. The tool aims to streamline debugging processes and is evolving to support broader community applications.

https://engineering.fb.com/2023/12/19/data-infrastructure/hawkeye-ai-debugging-meta/

Instacart: One model to serve them all

Instacart Ads writes about its Unified Browse pCTR model using Deep Learning to improve ad relevance and performance across browsing surfaces. The model replaced multiple legacy XGBoost models, addressing limitations like disparate training datasets and maintenance complexity. The unified model, leveraging high-cardinality features and deep learning frameworks, significantly improved metrics like AUC-PR and AUC-ROC, enhancing user profiling and ad performance.

https://tech.instacart.com/one-model-to-serve-them-all-0eb6bf60b00d

LinkedIn: Deployment of Exabyte-Backed Big Data Components

LinkedIn operates one of the largest Apache Hadoop clusters, facing challenges in deploying code changes due to its scale. LinkedIn developed a Rolling Upgrade (RU) framework, enabling smooth deployments across over 55,000 hosts and 20 clusters with minimal downtime. This framework automates and monitors deployments, significantly reducing manual intervention and achieving over 99% success rates in upgrades, enhancing the reliability and efficiency of their big data infrastructure.

https://engineering.linkedin.com/blog/2023/deployment-of-exabyte-backed-big-data-components

Expedia: Explore near Real-time Streaming Data Using a Web UI

Expedia writes about its journey to build streaming high-volume data over WebSockets. Using a single WebSocket handler, Expedia struggled with latency and scalability when handling large Kafka topics. To address this, Expedia developed a more efficient system separating WebSocket session handling from data filtering. Expedia writes about how it utilized Kafka for data distribution, Postgres for event-driven cache updates, and Kubernetes for scalable deployment.

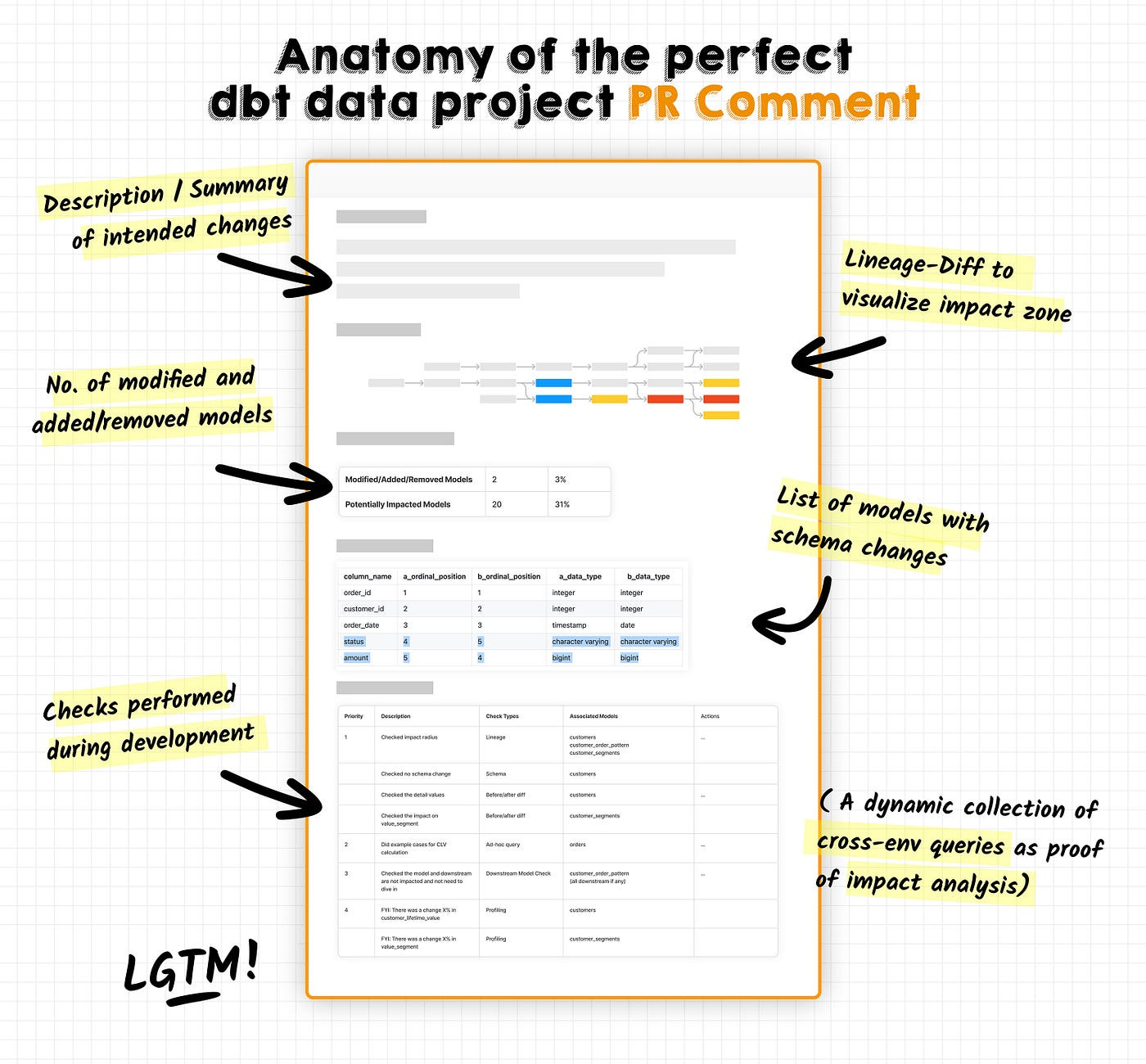

Dave Flynn: The anatomy of a perfect pull-request comment for dbt data projects

Should we structure the pull requests? Hell yes. How can we build the same for dbt projects?

The author explores what would be a perfect PR template for a dbt project.

All rights reserved ProtoGrowth Inc, India. I have provided links for informational purposes and do not suggest endorsement. All views expressed in this newsletter are my own and do not represent current, former, or future employers’ opinions.