Data Engineering Weekly #174

The Weekly Data Engineering Newsletter

Data Engineering Weekly is sponsored by Astronomer—Enterprise-Grade Apache Airflow. Deliver data on time with the speed and scale your application demands.

AI Verify Foundation: Model AI Governance Framework for Generative AI

Several countries are working on governance rules for Gen AI, and data sovereignty will play a vital role as they formulate regulations. The AI Verify Foundation, a not-for-profit, wholly owned subsidiary of the Info-communications Media Development Authority of Singapore (IMDA), has published its Model AI Governance Framework for Generative AI. The key highlights focus on:

Accountability

Data Quality & Governance

Transparency in the development and deployment of AI models

A structured approach to incident reporting and third-party testing

AI for Public Good

O'Reilly: What We Learned from a Year of Building with LLMs

The article summarizes lessons learned from a year of building applications with LLMs and the best practices to follow. A few highlights:

Prompting Techniques: Effective prompting is crucial. Techniques like in-context learning, chain-of-thought, and providing relevant resources can significantly improve LLM performance.

Retrieval-Augmented Generation (RAG): RAG effectively incorporates new information into LLMs, improving output quality by grounding responses in relevant documents. It is often preferable to fine-tuning due to the ease of updating and better handling of new knowledge.

Workflow Optimization: Decomposing complex tasks into smaller, manageable steps and prioritizing deterministic workflows can enhance the reliability and performance of LLM-based systems.
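To make the RAG highlight concrete, here is a minimal sketch of the retrieve-then-prompt step. It is not taken from the O'Reilly article: the keyword-overlap scoring stands in for a real embedding or search index, and the names `retrieve`, `build_prompt`, and `DOCS` are hypothetical.

```python
import re


def tokenize(text):
    """Lowercase a string and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, k=2):
    """Return up to k documents ranked by keyword overlap with the question.

    Assumption: plain token overlap replaces the vector or hybrid search
    a production RAG system would use.
    """
    q_tokens = tokenize(question)
    scored = [
        (len(q_tokens & tokenize(doc)), index, doc)
        for index, doc in enumerate(documents)
    ]
    # Highest overlap first; original order breaks ties.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [doc for score, _, doc in scored[:k] if score > 0]


def build_prompt(question, documents, k=2):
    """Ground the question in retrieved context before calling an LLM."""
    context = retrieve(question, documents, k)
    lines = ["Answer using only the context below.", ""]
    lines += [f"[{n}] {doc}" for n, doc in enumerate(context, start=1)]
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)


# Hypothetical knowledge base for illustration only.
DOCS = [
    "Airflow schedules batch pipelines as DAGs.",
    "ClickHouse is a columnar OLAP database.",
    "Flink keeps streaming state in a state backend.",
]

if __name__ == "__main__":
    print(build_prompt("Where does Flink keep streaming state?", DOCS, k=1))
```

Because the knowledge lives in `DOCS` rather than in model weights, updating what the model can cite is a matter of changing the documents, which is the ease-of-update argument made above.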

https://www.oreilly.com/radar/what-we-learned-from-a-year-of-building-with-llms-part-i/

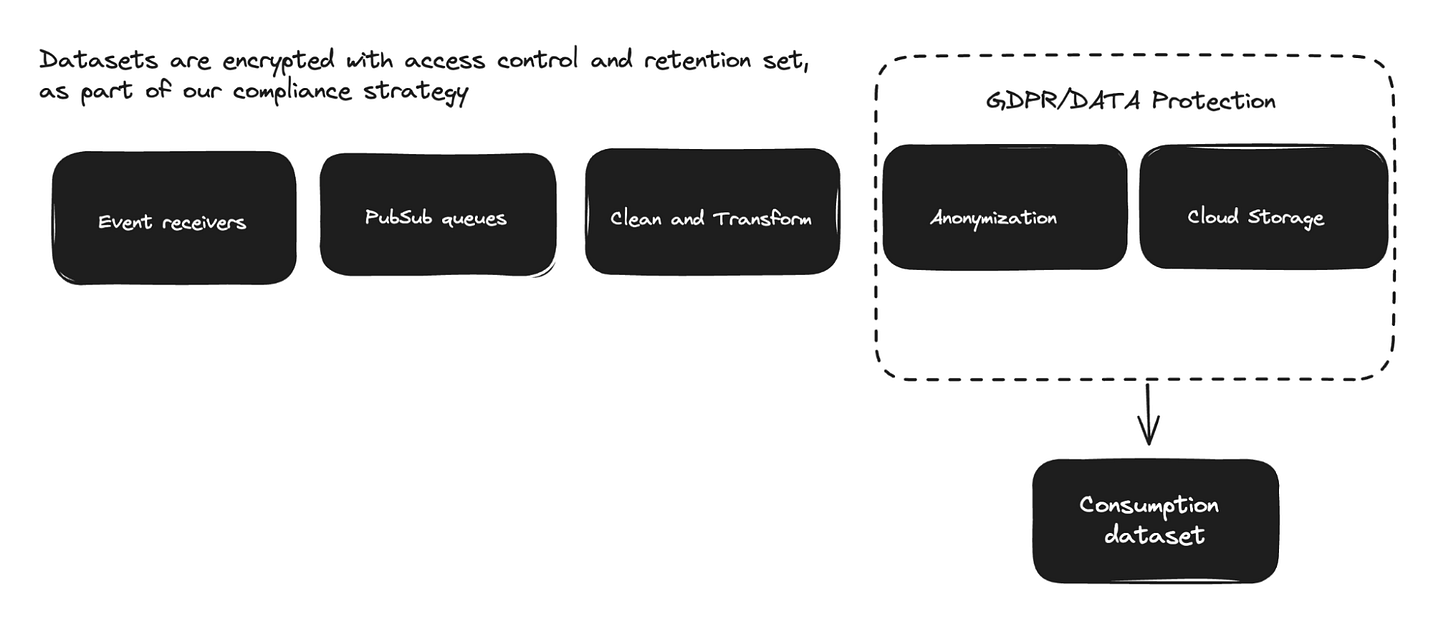

Solmaz Shahalizadeh: How to get more out of your startup’s data strategy

Data is an afterthought in many organizations. A founder starts with a vision and drives the product roadmap, yet data plays a critical role in helping the founder’s vision iterate faster and grow the business. How should a startup think about its data strategy? The author highlights a structured approach to building data infrastructure, data management, and metrics.

https://www.bvp.com/atlas/how-to-get-more-out-of-your-startups-data-strategy

Sponsored: DoubleCloud - More than just ClickHouse

ClickHouse is the fastest, most resource-efficient OLAP database, which queries billions of rows in milliseconds and is trusted by thousands of companies for real-time analytics. Run ClickHouse anywhere and enjoy up to 100x faster performance compared to other DBMSs while DoubleCloud takes care of the maintenance, providing 24/7 monitoring along the way. Start a free trial and see just how easy it is to get ClickHouse’s incredible speed for real-time analytics at scale!

https://double.cloud/services/managed-clickhouse/

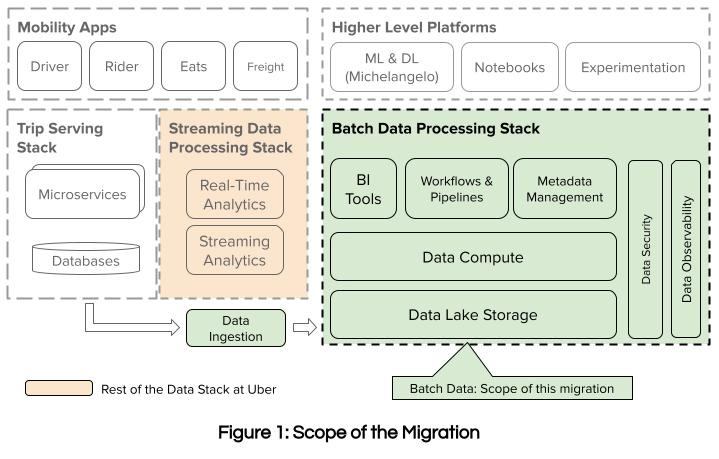

Uber: Modernizing Uber’s Batch Data Infrastructure with Google Cloud Platform

Uber runs one of the largest Hadoop installations, with exabytes of data. Uber writes about its decision to move its batch data infrastructure from on-prem to GCP and describes a typical migration strategy:

Lift & Shift the stack as-is to minimize disruption.

Deprecate in-house solutions slowly in favor of cloud solutions as a continuous refactoring of the stack.

https://www.uber.com/blog/modernizing-ubers-data-infrastructure-with-gcp/

Meta: Forecasting@Meta - Balancing Art and Science

The article discusses how Meta employs a hybrid approach to improve the accuracy of its forecasts by integrating traditional statistical methods with advanced machine learning techniques. Meta combines different forecasting methods, leveraging ensemble learning and transfer learning principles. This approach allows Meta to achieve better adaptability and accuracy in predicting future trends by harnessing the strengths of various models and techniques.
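As a rough illustration of combining forecasting methods, the sketch below blends three simple statistical baselines with a weighted average. This is not Meta's method: the baselines, the function names, and the equal default weights are all assumptions chosen to keep the example small.

```python
from statistics import mean


def naive_forecast(history):
    """Predict that the next value equals the last observed value."""
    return history[-1]


def moving_average_forecast(history, window=3):
    """Predict the mean of the most recent `window` observations."""
    return mean(history[-window:])


def drift_forecast(history):
    """Extend the average historical step one period forward."""
    step = (history[-1] - history[0]) / (len(history) - 1)
    return history[-1] + step


def ensemble_forecast(history, weights=None):
    """Blend the three baselines with a weighted average.

    Assumption: weights default to equal. In practice they might be
    tuned on a holdout window, which this sketch does not do.
    """
    forecasts = [
        naive_forecast(history),
        moving_average_forecast(history),
        drift_forecast(history),
    ]
    if weights is None:
        weights = [1 / len(forecasts)] * len(forecasts)
    return sum(w * f for w, f in zip(weights, forecasts))


if __name__ == "__main__":
    print(ensemble_forecast([10, 12, 14, 16]))
```

The appeal of an ensemble is that each baseline fails differently: the moving average lags a trend while the drift model overshoots after a level shift, so the blend tends to be less wrong than the worst member.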

https://medium.com/@AnalyticsAtMeta/forecasting-meta-balancing-art-and-science-92526e1ae36c

Sponsored: How to Use Airflow to Monitor and Optimize Snowflake Usage

Managing massive amounts of data efficiently is key in today’s data-driven economy. Grindr knows this firsthand and set out to balance Snowflake usage with cost optimization. The resulting solution was SnowPatrol, an OSS app that alerts on anomalous Snowflake usage, powered by ML Airflow pipelines. Register for this webinar to get a behind-the-scenes look at how to implement this next-gen monitoring to proactively identify abnormal Snowflake usage.

Yaroslav Tkachenko: My Biggest Issue With Apache Flink - State Is a Black Box

State management is a vital part of stream processing, and the author points out how painful it is to build and audit state in a Flink application. TIL that queryable state is deprecated, which surprises me too. The author highlights the new state reader in Spark Streaming and emphasizes the need to improve the observability and developer-friendliness of Flink state management.

https://streamingdata.substack.com/p/my-biggest-issue-with-apache-flink

Spotify: Data Platform Explained Part II

Spotify writes the second part of its series on building a data platform, covering scalability, the tooling it uses and provides, and the value each building block brings to a data platform. By investing in tooling and documentation and fostering a strong community, Spotify ensures its data platform evolves to meet its ever-growing business demands.

https://engineering.atspotify.com/2024/05/data-platform-explained-part-ii/

Devoted Health: Building a Custom Static Analysis Tool For Looker

Devoted Health writes about the performance issues with Looker’s built-in LookML Validator, which checks syntax, consistency, and enforcement of best practices. The slow validation hurts user productivity, and the blog explains how the data team built a faster custom LookML validator integrated with the IDE.

https://tech.devoted.com/building-a-custom-static-analysis-tool-for-looker-914f44b74c14

Adevinta: How we moved from local scripts and spreadsheets shared by email to Data Products

Data product thinking is shaping data management to build reliable, customer-centric data applications, and the tooling that supports data products is on the rise. Adevinta writes about how its data-building process evolved from local scripts, to spreadsheets shared by email, to data products. The blog highlights the critical factors for data products' success: standardization of producing data assets, a uniform CI/CD process, and standard testing methodologies.

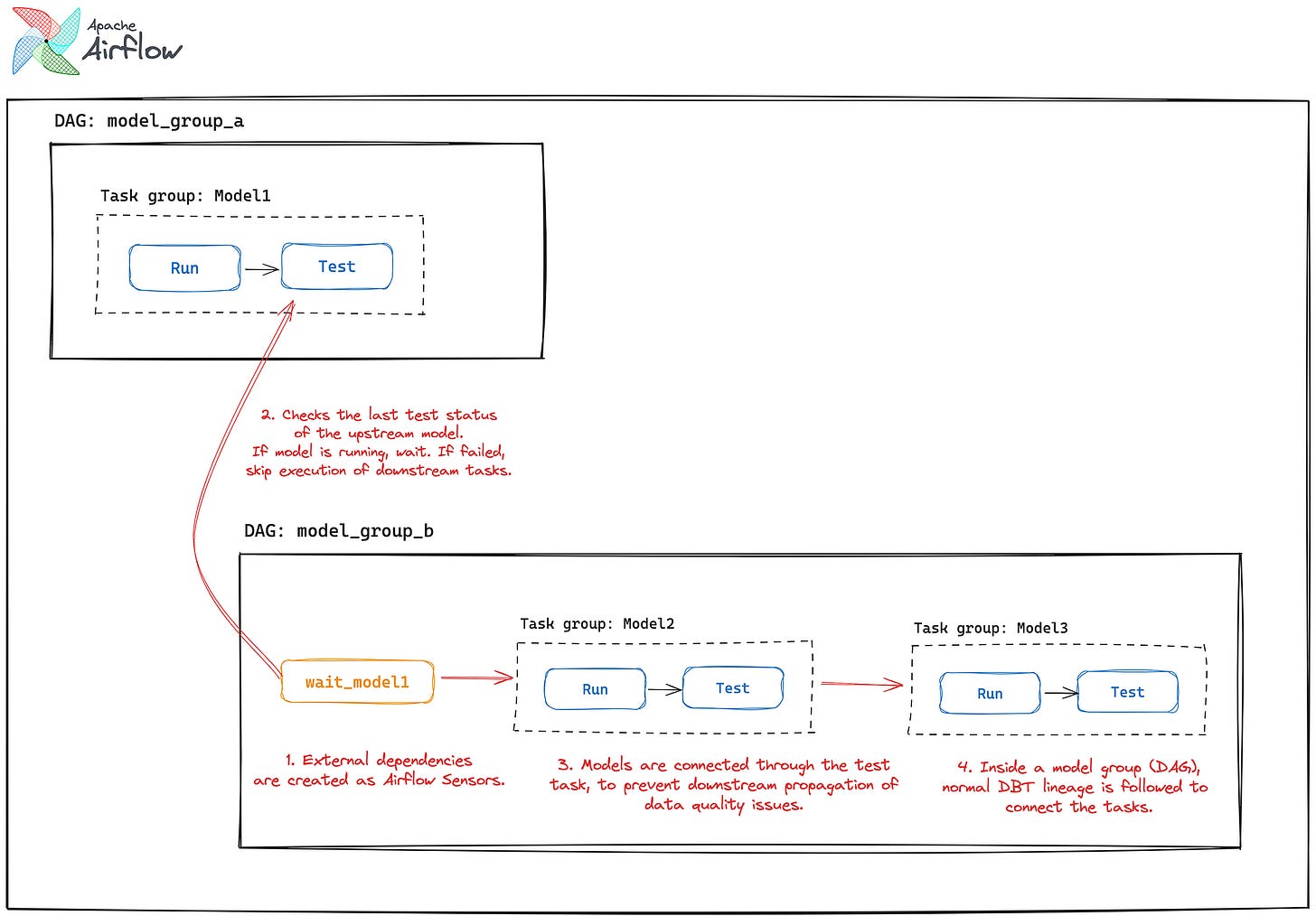

Alexandre Magno Lima Martins: How we orchestrate 2000+ DBT models in Apache Airflow

Integrating Airflow with dbt has its challenges. Should we adopt one DAG to execute all dbt models or one DAG per dbt model? Both are suboptimal. The author highlights adopting a “model grouping” approach to pack all the relevant dbt models into one DAG group to provide better isolation.
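One way to picture the model-grouping idea is the sketch below, which buckets models into groups and derives the dependencies between groups. It is only an illustration under stated assumptions: the `MODELS` mapping, the layer-based grouping key, and both function names are hypothetical, and the code deliberately avoids the Airflow and dbt APIs so it runs on its own.

```python
# Hypothetical dbt project: model name -> (group, upstream model names).
# Assumption: each model is assigned to exactly one group (here, a layer).
MODELS = {
    "stg_orders": ("staging", []),
    "stg_users": ("staging", []),
    "fct_orders": ("marts", ["stg_orders", "stg_users"]),
    "rpt_revenue": ("reporting", ["fct_orders"]),
}


def group_models(models):
    """Return a mapping of group name -> sorted list of its models."""
    groups = {}
    for name, (group, _upstream) in models.items():
        groups.setdefault(group, []).append(name)
    return {group: sorted(names) for group, names in groups.items()}


def group_dependencies(models):
    """Return a mapping of group name -> sorted list of upstream groups.

    Edges between models in the same group are dropped, since they
    would be handled inside that group's own DAG.
    """
    deps = {}
    for _name, (group, upstream) in models.items():
        deps.setdefault(group, set())
        for parent in upstream:
            parent_group = models[parent][0]
            if parent_group != group:
                deps[group].add(parent_group)
    return {group: sorted(parents) for group, parents in deps.items()}


if __name__ == "__main__":
    print(group_models(MODELS))
    print(group_dependencies(MODELS))
```

Each group could then map to one DAG, with the cross-group edges turned into dependencies between DAGs. That sits between the two extremes mentioned above: fewer DAGs than one per model, and more isolation than a single DAG for everything.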

https://medium.com/apache-airflow/how-we-orchestrate-2000-dbt-models-in-apache-airflow-90901504032d

All rights reserved ProtoGrowth Inc, India. I have provided links for informational purposes and do not suggest endorsement. All views expressed in this newsletter are my own and do not represent current, former, or future employers’ opinions.